These comments are an adjunct to David England’s excellent presentation to Connections UK 2015 on the UK Army’s Light Role Battlegroup Experiment, conducted on behalf of Capability Directorate Combat by Niteworks. As David explained, wargaming enabled by manual simulation was central to his Analysis and Experiment (A&E) approach. Because the experiment focussed on battlegroups we could model aggregated force elements (FEs), usually representing these at sub-unit (company/squadron) level or, occasionally, as platoons/troops. Hence the supporting Operational Analysis (OA) was predicated on aggregated modelling, generally using force ratios to inform military judgement with respect to likely combat outcomes. This ‘big handful’ approach was also applied to terrain; while we took account of broad ranges, rates of advance, terrain types etc, we did not get into detailed Line of Sight (LOS), individual weapon ranges and so forth.

That worked fine, as David explained at Connections UK. But I was wary of a level of tactical detail at which an aggregated approach in a manual simulation would be unlikely to remain fit for purpose: when you have to consider individual platforms’ capabilities, single shot kill probability (SSKP), micro-terrain etc. Hence I introduced an ‘aggregated vs tactical weeds modelling line’ into the planning discussions, below which we should not stray. Aggregation works if you are modelling above this line, but not if you are working below it in the ‘tactical weeds’ of SSKPs, LOS etc. This is a significant distinction.


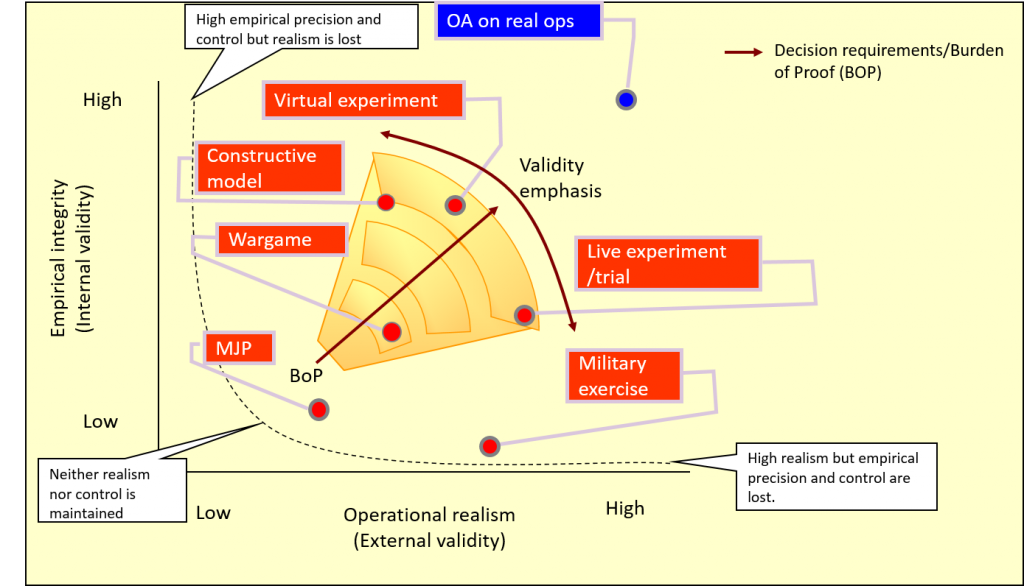

We didn’t need to drop below the line in David’s project. However, a subsequent experiment concerned generating the Concept of Employment (CONEMP) for the UK’s new SCOUT reconnaissance vehicle. This vehicle does not yet exist; everything is theoretical. Wargaming supported by manual simulation was again adopted in the preliminary stages of the experiment as a precursor activity for A&E activities supported by constructive, virtual and live computer simulations. The manual simulation-supported wargame was itself preceded by Military Judgement Panels (MJPs) and simple MAPEXes. The progression is shown in one of David’s diagrams, below.


Experiment Design Space, courtesy of Niteworks


No question this time that we were working below the ‘aggregated vs tactical weeds modelling line’: our SMEs were C Sqn of the Royal Lancers (RL), and we would be working with the Officer Commanding, 2ic, Troop Commanders and sergeants through to the gunners in individual vehicles. The dismounted recce capability of the RL (and other Army recce units) is generally provided by 4-man OPs, and the RL are also expected to dismount and operate as conventional infantry in 4-man fire teams. Hence our basic FEs were individual vehicle platforms and 4-man fire teams, often operating crew-served weapons such as the Javelin ATGW.


I was again initially concerned that manual simulation would not provide the fidelity to work in the ‘tactical weeds’ space – a view echoed by Tom Mouat at the Connections UK 2015 ‘101’ who, quite rightly, questioned the ability of manual sims to cope with, by way of example, determination of detailed LOS from a map. Three months ago I would have agreed, but we got on and developed a manual sim approach designed for use below the aggregated modelling line – and it worked! Aspects you might find interesting or useful are discussed below.


Design approach. This was:


- Confirm that the aim of the wargame supported the experimental objectives.

- Identify the detailed focus areas, derived from the Data Collection and Management Plan (DCMP).

- Determine how each focus area would be evaluated and analysed.

- Identify the metrics to be gathered to support that evaluation (including how to achieve data capture).

- Identify the scenario(s), vignettes and ‘deep dives’ required to support the analysis.

- Identify the people required to ensure validity of the analysis.

- Agree the key assumptions with capability owners.

- Design the processes required to achieve all of the above.

- Develop the required tools (OA, tables).

- Document all work, assumptions, decisions, outcomes and analysis.


Deconstructed computer simulation. The fundamental approach we adopted was that of a ‘deconstructed computer sim’, be that constructive or virtual. In common with most computer sims that model at the platform level, we determined the percentage chance (p) of: detect; hit; then kill (more precisely, effect). We based these on the User Requirement Document (URD) plus manufacturer’s data where possible, and interpolated (filling in the gaps between known data points) and extrapolated (estimating beyond the known data range) using SME judgement. I refer to this as ‘URD (+)’ hereafter. We derived a series of tables for every vehicle and FE type in the scenario, correlating these against every opposing vehicle and FE type at various ranges, in different terrain types and with various positive or negative modifiers; a serious and lengthy undertaking even with just three or four vehicle types per side. We then presented these URD (+) figures transparently to the C Sqn RL SMEs during the wargame for their comments. This both enabled the wargame and identified important areas for subsequent examination. The detailed process was:


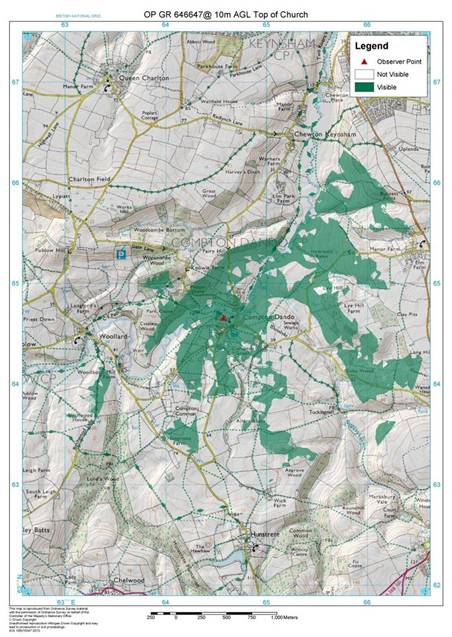

- LOS determination. This was accomplished using good old-fashioned map reading skills, with a ‘squad average’ consensus based on an appreciation of the map and accompanying photographs of the actual terrain. We were quite bullish about this approach as all agreed that basic map reading skills and an understanding of the ground remain a key requirement – especially for recce soldiers. We also produced intervisibility studies for a number of key (fixed enemy) positions to inform this process; see the example below. If necessary, the Niteworks Game Controller adjudicated according to the needs of the experiment and noted the assumptions made. Most computer sims will produce a LOS ‘fan’ or similar, but these are usually based solely on contours and take no account of vegetation; a serious limitation that can lead to false lessons. Our ideal was to combine the wargame with an actual visit to the ground (a Tactical Exercise Without Troops, or TEWT); the insights generated by comparing the map-based wargame, the anticipated URD (+) and the reality of what you could, and could not, see and do on the actual ground were startling.

- p (detect). We were ready to differentiate between ‘Detect – Recognise – Identify’ (DRI) but, given the high intensity warfighting nature of the scenario, didn’t need to. This DRI determination is probably best done during the virtual experiment phase and then live trials. We simply explained the URD (+) p (detect) to the SMEs and adjudicated or rolled percentage dice (d%) to determine the outcome.

- p (hit). Again, we simply and transparently presented the URD (+) figure for discussion, then rolled d% against the agreed number. An important consideration was the difference between the technically possible rate of fire and the achievable rate of fire given fleeting targets, observation constraints etc. We relied on SME judgement and noted queries arising for examination during the virtual and live trials stages.

- p (kill). Again, we were prepared to differentiate between ‘M’ (mobility), ‘F’ (firepower) and ‘K’ (destroyed) vehicle kills, but found that we didn’t need to and agreed that this detail is better considered in the subsequent stages of the experiment. The p (effect) on dismounted FEs, crew-served weapons (and some vehicles) was interesting. The URD stated ‘chance to defeat’, which we found unhelpful at this platform level, so we classified effects as: suppressed; neutralised; or destroyed.

Intervisibility study used to augment map-based LOS determination
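For readers who think in code, the LOS check – p (detect) – p (hit) – p (effect) sequence can be sketched in a few lines of Python. This is an illustration only, not the tables or tooling used in the experiment: the range bands, percentages, modifier and function names are all invented for the example. Linear interpolation between table rows, and clamping outside the table, are assumptions standing in for what was actually SME judgement.

```python
import random
from bisect import bisect_left

# Hypothetical URD (+) style table: (range in metres, percentage chance).
# All figures are invented for illustration.
P_HIT_OPEN = [(500, 90), (1000, 75), (2000, 50), (3000, 20)]


def lookup_pct(table, range_m, modifier=0):
    """Percentage chance at range_m, linearly interpolated between table rows.

    Ranges outside the table are clamped to the nearest row (an assumption,
    not extrapolation); the modifier is added and the result kept in 0-100.
    """
    ranges = [r for r, _ in table]
    if range_m <= ranges[0]:
        pct = table[0][1]
    elif range_m >= ranges[-1]:
        pct = table[-1][1]
    else:
        i = bisect_left(ranges, range_m)
        (r0, p0), (r1, p1) = table[i - 1], table[i]
        pct = p0 + (p1 - p0) * (range_m - r0) / (r1 - r0)
    return max(0, min(100, pct + modifier))


def roll_d100(rng):
    """Roll percentage dice: an integer from 1 to 100."""
    return rng.randint(1, 100)


def resolve_engagement(has_los, p_detect, p_hit, p_effect, rng=random):
    """Step through LOS -> detect -> hit -> effect, stopping at the first failure.

    Each p_* is an agreed percentage (0-100); a roll succeeds when d% <= p.
    Returns a short description of where the sequence ended.
    """
    if not has_los:
        return "no LOS"
    if roll_d100(rng) > p_detect:
        return "not detected"
    if roll_d100(rng) > p_hit:
        return "missed"
    if roll_d100(rng) > p_effect:
        return "hit, no effect"
    return "effect achieved"
```

A call such as `resolve_engagement(True, 80, lookup_pct(P_HIT_OPEN, 1500), 60)` plays out one engagement. What the code cannot capture is the important part: in the wargame each figure was put in front of the SMEs for discussion before any dice were rolled.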


Wargaming as scoping activity. As alluded to above, many of the wargame outputs highlighted areas for subsequent investigation during the virtual and/or live trials phase of the experiment. A dozen or so people wargaming for a couple of days in the early stages of an experiment provides focus for subsequent activities and saves significant resources downstream in the – far more expensive – constructive, virtual and live simulation phases.


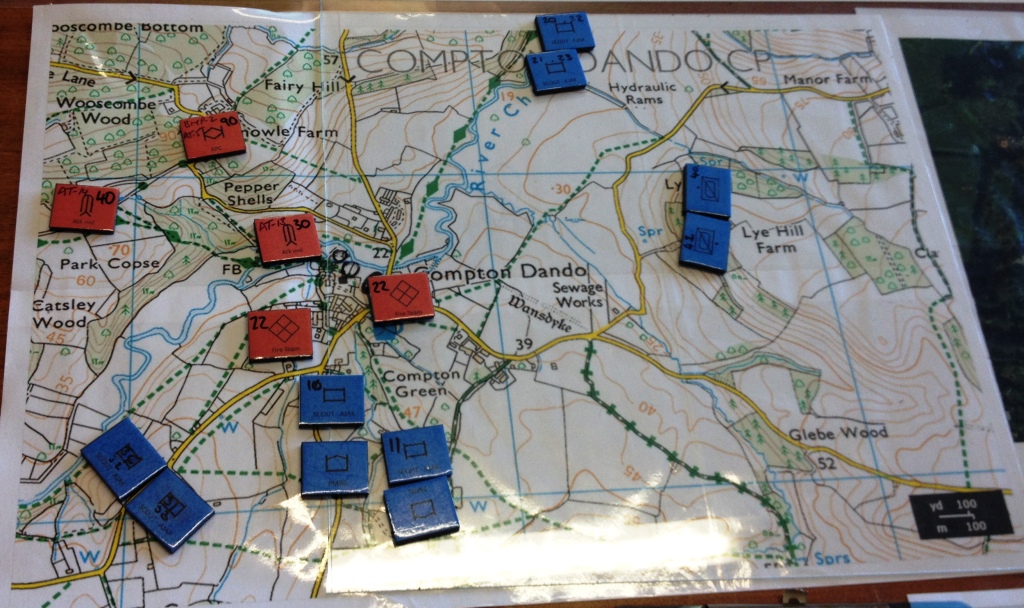

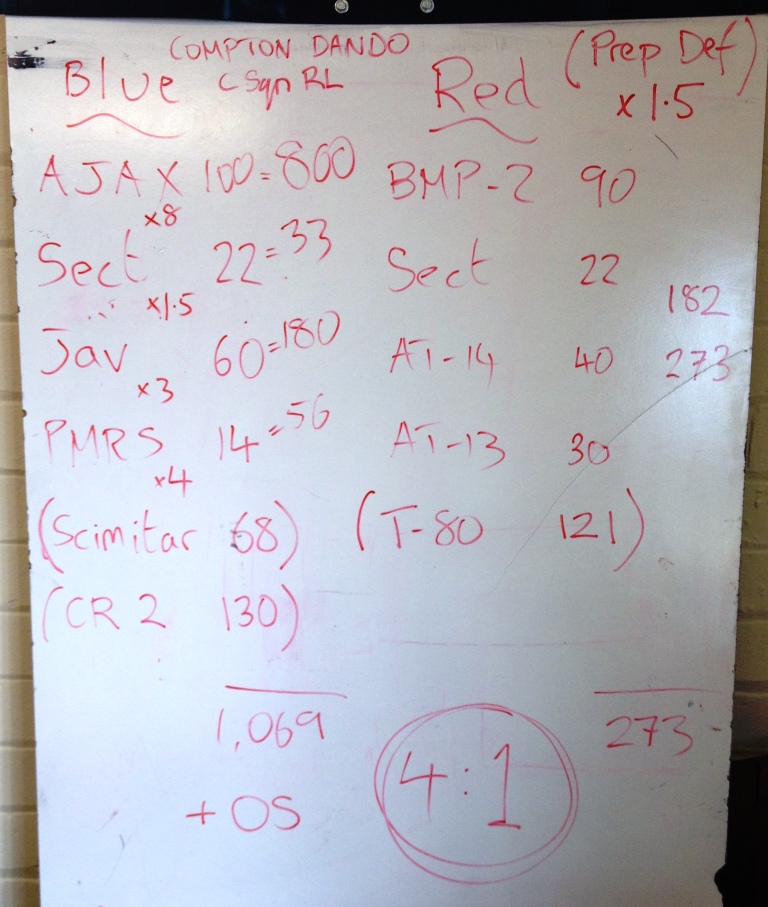

Combining manual simulation with other methods. Although I said earlier that using force ratios below the ‘aggregated modelling line’ can be problematic, we still used the technique in a complementary approach. The RL also use force ratios in their planning, so we thought it sensible to do likewise. The three illustrations below show: an expanded 1:25,000 map and counters, each with a combat factor noted on it; a quick white-board calculation of force ratios for a raid; and the preceding Close Target Reconnaissance (CTR), examined using a variation of matrix gaming. Note on the white board photo that factors shown in brackets were used only for comparative purposes against other platforms and form no part of the final calculation.

Expanded 1:25,000 map and counters showing detail of a raid

Rapid white board calculation of force ratios

‘4-box method’ derived from matrix gaming techniques, in this instance examining the factors leading to the success (or not) of a CTR
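The white-board arithmetic amounts to summing the combat factors on each side's counters and dividing one total by the other. A minimal Python sketch follows; the counter names and factors are invented for the example (none are taken from the experiment), and integer factors are assumed. Whether a given ratio is good enough remains a matter of military judgement.

```python
from fractions import Fraction


def force_ratio(attacker_factors, defender_factors):
    """Return total attacker combat factors over total defender combat factors.

    Both arguments map a counter name to an integer combat factor.
    """
    attack = sum(attacker_factors.values())
    defend = sum(defender_factors.values())
    if defend <= 0:
        raise ValueError("defender must have a positive total combat factor")
    return Fraction(attack, defend)


# Hypothetical counters and combat factors for a raid.
raiders = {"vehicle 1": 4, "vehicle 2": 4, "Javelin fire team": 3, "fire team": 1}
defenders = {"infantry section": 2, "APC": 2}

ratio = force_ratio(raiders, defenders)
print(f"{ratio.numerator}:{ratio.denominator}")  # prints "3:1"
```

Using `Fraction` keeps the result in the familiar ‘3:1’ form rather than a decimal, which is closer to how the ratio would be written on the board.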


Informing military judgement. All of the above methods do no more than prompt discussion and help inform military judgement, in this case that of the C Sqn RL SMEs. It is their views that the wargame was designed to elicit, and a dedicated scribe noted their observations, insights and lessons identified. As discussed, many of these were carried forward into, and provided focus for, subsequent phases of the experiment.


Transparency and Red Teaming. All calculations, determinations and adjudications were conducted entirely transparently so that everyone understood all data and assumptions. Furthermore, the SMEs were requested to challenge any and all assumptions, even – especially – their own. This Red Teaming played a key role in identifying aspects of the CONEMP that needed further examination.


Lessons identified


A number of lessons identified are worth re-emphasising:


- Manual simulation works in the ‘tactical weeds’ space below the ‘aggregated modelling line’.

- Manual simulation-supported wargaming conducted as a scoping activity for downstream computer simulation-supported activities and live trials adds focus and saves significant time and money.

- The A&E process is iterative, with initial findings needing to be fed back into the process for subsequent (re-)consideration.

- The ‘deconstructed computer sim’ approach of LOS check – p (detect) – p (hit) – p (kill) worked well.

- Considering DRI and the achievable rate of fire, and replacing ‘kill’ with ‘effect’, increases validity.

- Transparency of data, its provenance and the process by which it is used is important.

- Red Teaming – challenging assumptions – is critical.

- No single approach satisfies all A&E goals; many types of simulation and different techniques should, where appropriate, be considered.

- Forcing consideration of LOS from an appreciation of the map reinforces the necessity of this fundamental skill.

- A TEWT adds significant value to the combination of map-based LOS determination and manual simulation, both to the analysis output and to participants’ training.